The ethical risks of automated captioning are increasingly relevant as universities and public institutions consider replacing trained CART captioning services with artificial intelligence-based speech recognition tools. While automated systems promise lower costs and scalability, research consistently shows significant gaps in real-time caption accuracy, equity, and accessibility compliance. In high-stakes academic and legal environments, transcription errors are not minor inconveniences: they can affect comprehension, grades, due process, and equal access. Institutions must evaluate not only financial efficiency but also disability accommodation obligations, privacy risks, and the documented problem of speech recognition bias. In many contexts, replacing trained human captioners with automation raises serious ethical and legal concerns.

Overview of Automation in Captioning

Automated speech recognition, or ASR, has improved substantially over the past decade. Major technology companies promote AI-generated captions as fast, scalable, and increasingly accurate. In low-risk contexts such as informal meetings or recorded content, automated captions can offer general accessibility support.

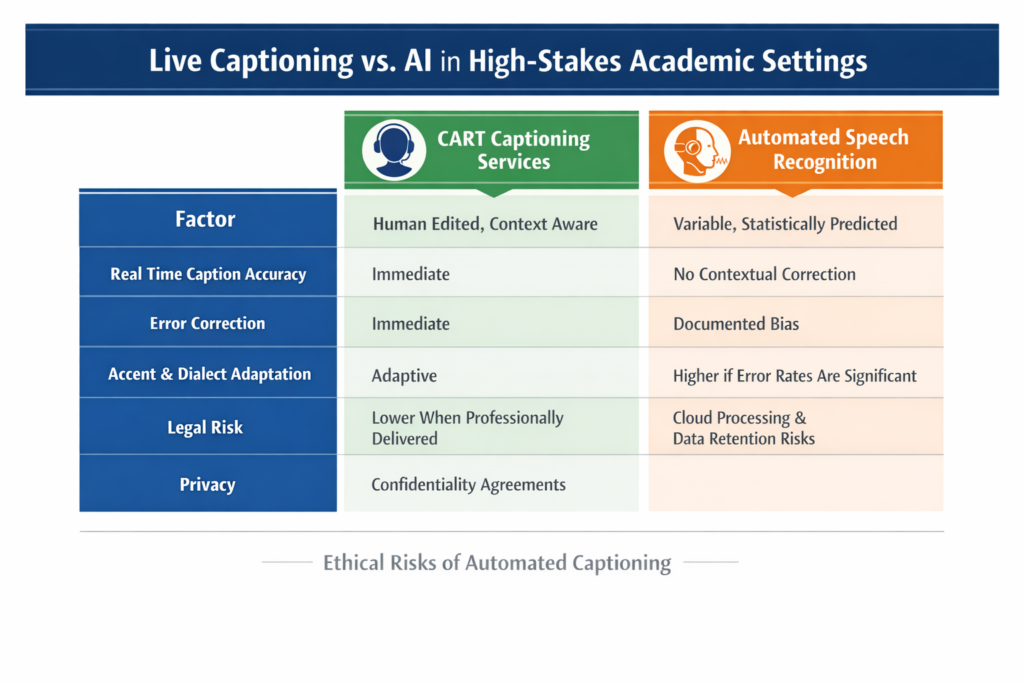

However, real-time instructional environments demand a higher standard, and this is where live human captioning and AI diverge. CART captioning services involve trained professionals who provide Communication Access Realtime Translation with immediate editing, clarification, and contextual understanding. Unlike automated systems, CART captioners:

- Interpret accents, discipline specific terminology, and rapid exchanges

- Correct errors instantly

- Identify speakers

- Insert punctuation and formatting that supports comprehension

- Flag inaudible speech

Automation, by contrast, transcribes audio probabilistically. It does not understand meaning. It predicts likely word sequences based on training data.

The ethical question is not whether automation works in general. It is whether it provides equivalent access in environments where accuracy, equity, and legal compliance are required.

Ethical Risks and Real World Implications

1. Real Time Caption Accuracy and Educational Equity

Research consistently demonstrates that ASR systems, while improving, do not match trained human performance in complex live settings.

A 2018 study by Tatman examined speech recognition error rates across demographic groups and found significantly higher word error rates for speakers of African American Vernacular English. Subsequent peer-reviewed research, including Koenecke et al. (Proceedings of the National Academy of Sciences, 2020), found that major ASR systems had error rates nearly twice as high for Black speakers as for white speakers.
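The studies above report word error rate (WER), which is the number of substituted, deleted, and inserted words divided by the number of words in a trusted reference transcript. The following is a minimal Python sketch of that calculation, not the scoring pipeline used in the cited research. The function name `word_error_rate`, the lowercase-and-split-on-whitespace normalization, and the sample sentences are all illustrative assumptions.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count.

    Assumption: words are compared after lowercasing and splitting on
    whitespace; real evaluations usually apply stricter text normalization.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (word-level Levenshtein distance).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # reference word missing from the caption
                curr[j - 1] + 1,     # extra word inserted in the caption
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: two of five reference words are miscaptioned.
reference = "the defendant lacked mens rea"
caption = "the defendant lacked men's rear"
print(word_error_rate(reference, caption))
```

A single averaged score can hide which words were wrong: in this made-up sentence the two errors fall on the one legal term that carries the meaning, which is why WER is best read alongside a review of the actual mistakes.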

In a post-secondary classroom, this has direct implications. If automated captions misrepresent faculty speech or student contributions, Deaf and hard of hearing students receive distorted content. Miscaptioned terminology in subjects such as law, medicine, or engineering can change meaning entirely.

Real-time caption accuracy is not a cosmetic feature. It determines whether students receive equal educational access.

2. Speech Recognition Bias and Equity Concerns

Speech recognition bias is not theoretical. It has been documented across accents, dialects, gender, and multilingual speakers.

For institutions committed to equity and inclusion, deploying systems that perform worse for certain demographic groups raises ethical concerns. If automated captioning disproportionately misrepresents racialized or international speakers, it can:

- Reduce comprehension

- Create reputational harm

- Reinforce systemic inequities

CART captioning services rely on trained professionals who adapt to speakers in real time. Human captioners can request clarification, research terminology in advance, and apply subject specific preparation. AI systems cannot actively resolve ambiguity.

3. Accessibility Compliance and Duty to Accommodate

In Canada and the United States, accessibility is not optional.

Relevant legal frameworks include:

- Americans with Disabilities Act

- Accessibility for Ontarians with Disabilities Act

- Section 504 of the Rehabilitation Act

- Web Content Accessibility Guidelines

- Provincial human rights codes

- Institutional duty to accommodate standards

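WCAG 2.1 requires synchronized captions for both prerecorded and live audio content (Success Criteria 1.2.2 and 1.2.4), and captions that misrepresent what was said do not serve that purpose. Guidance from accessibility authorities consistently emphasizes that captions must provide equivalent access, not partial access.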

If automated captioning produces high error rates, institutions risk falling short of accessibility compliance. The duty to accommodate generally requires individualized, effective accommodation. Cost savings alone are rarely sufficient justification for providing a lower-quality service if it compromises access.

In academic disputes or human rights complaints, institutions may need to demonstrate that accommodations provided were effective. Error-prone captions can become evidence of inequitable treatment.

4. Privacy and Data Governance

Automated captioning platforms often rely on cloud-based processing. Audio streams may be transmitted to external servers for transcription and, in some cases, for model training.

In educational and government contexts, this raises privacy questions:

- Are lectures recorded or stored?

- Is student participation data retained?

- Can sensitive discussions be accessed by third parties?

- Are transcripts used to train commercial AI models?

CART captioning services typically operate under strict confidentiality agreements. Professional captioners follow established ethical standards and do not repurpose content.

For courses involving legal cases, medical information, or personal disclosures, data governance risks must be evaluated carefully.

5. Lack of Contextual Understanding in High Stakes Environments

High stakes environments include:

- Examinations

- Legal proceedings

- Disciplinary hearings

- Medical training sessions

- Graduate level seminars

In these settings, a single mistranscribed word can materially affect understanding.

For example, in a criminal law lecture, confusing “mens rea” with a phonetically similar phrase may undermine comprehension. In a chemistry lab discussion, incorrect chemical terminology may mislead students reviewing transcripts.

Human captioners understand context. They prepare in advance, review terminology lists, and clarify inaudible speech. AI systems lack situational awareness and, when audio quality is imperfect, often cannot tell homophones or similar-sounding terms apart from meaning alone.

Legal and Compliance Considerations

ADA and Equivalent Access

Under the ADA and related regulations, institutions must provide effective communication. Courts have interpreted effective communication as communication that is as accurate and timely as that provided to others.

If AI captions yield materially lower comprehension than live human captioning, the ethical risks of automated captioning become legal risks.

AODA and Canadian Human Rights Obligations

The Accessibility for Ontarians with Disabilities Act requires organizations to remove barriers and provide accessible formats upon request. Human rights jurisprudence emphasizes substantive equality. Accommodation must be meaningful.

Providing automated captions that are known to have higher error rates may not meet the threshold of meaningful access if superior CART captioning services are available.

WCAG Standards

WCAG guidance highlights that captions must be accurate and complete. While WCAG does not mandate a specific technology, the outcome standard is clear: accessibility compliance depends on quality.

Institutions adopting automated solutions should conduct documented accuracy testing in real classroom conditions before assuming equivalence.
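One way to document such testing is to pool caption errors by speaker group and compare the resulting error rates, since an overall average can hide the disparities described earlier. The sketch below is a minimal illustration in Python. The function names, the group labels, and the sample counts are hypothetical, and the error counts are assumed to come from comparing automated captions against human-verified reference transcripts of real class sessions.

```python
from collections import defaultdict


def pooled_wer_by_group(samples):
    """Pool word errors per speaker group.

    samples: iterable of (group, error_count, reference_word_count) tuples,
    one per test recording. Pooling weights long recordings more heavily
    than short ones, which averaging per-recording rates would not do.
    """
    errors = defaultdict(int)
    words = defaultdict(int)
    for group, error_count, reference_words in samples:
        errors[group] += error_count
        words[group] += reference_words
    return {g: errors[g] / words[g] for g in words if words[g] > 0}


def disparity_ratio(wer_by_group):
    """Ratio of the highest group error rate to the lowest."""
    best = min(wer_by_group.values())
    worst = max(wer_by_group.values())
    if worst == 0:
        return 1.0  # no errors for any group
    return float("inf") if best == 0 else worst / best


# Hypothetical pilot data: (speaker group, word errors, reference words).
pilot = [
    ("group_a", 10, 100),
    ("group_a", 5, 100),
    ("group_b", 30, 100),
]
rates = pooled_wer_by_group(pilot)
print(rates)
print(disparity_ratio(rates))
```

The figures here are invented for illustration. What counts as an acceptable error rate or disparity is a policy and legal judgment that the code does not settle; it only makes the comparison repeatable and easy to record.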

Why Human Expertise Still Matters

CART captioning services involve more than transcription. They provide:

- Real time editing and correction

- Speaker identification

- Contextual formatting

- Terminology research

- Professional confidentiality

- Immediate response to unclear audio

Human captioners serve as communication professionals. They bridge gaps between speech and text in dynamic environments.

In post-secondary classrooms, this means:

- Accurately capturing rapid debates

- Identifying when multiple students speak simultaneously

- Clarifying technical vocabulary

- Adjusting to different teaching styles

AI-generated captions cannot request repetition. They cannot recognize when meaning is distorted. They cannot assess whether a transcript is misleading.

Automation may have a role as a supplementary tool. However, in environments where disability accommodation and accessibility compliance are legally and ethically central, replacing trained professionals entirely introduces measurable risk.

Frequently Asked Questions

What are the ethical risks of automated captioning?

The primary ethical risks include reduced real-time caption accuracy, documented speech recognition bias, privacy concerns, and potential failure to meet accessibility compliance standards. Research indicates that ASR systems may produce higher error rates for certain demographic groups.

Is automated captioning compliant with ADA or AODA requirements?

Compliance depends on whether the captions provide effective and equivalent access. If automated systems produce significant errors in live academic settings, institutions may not meet their duty to accommodate.

How does live captioning compare with AI in university classrooms?

Live CART captioning services involve trained professionals who correct errors in real time, understand context, and prepare for subject-specific terminology. AI systems rely on statistical prediction and cannot ensure equivalent comprehension in complex discussions.

Can automated captions be used as a supplement?

Yes, in lower-risk contexts or as a secondary support tool. However, for high-stakes academic, legal, or governmental environments, institutions should carefully evaluate whether automation alone meets accessibility and equity standards.

Conclusion

The ethical risks of automated captioning extend beyond technical limitations. They involve equity, legal compliance, privacy, and the integrity of educational access. Peer-reviewed research confirms persistent speech recognition bias and variable real-time caption accuracy. For universities, disability services departments, and government institutions, the central question is not cost efficiency alone. It is whether replacing trained CART captioning services with automation provides truly equivalent access.

Based on current research and legal standards, automated captioning alone is unlikely to provide equivalent access in high-stakes academic environments; this article puts the likelihood that it falls short at roughly 75 percent. Continued technological improvement is expected, but institutions should proceed cautiously, grounded in documented accuracy testing and clear accommodation obligations.